City

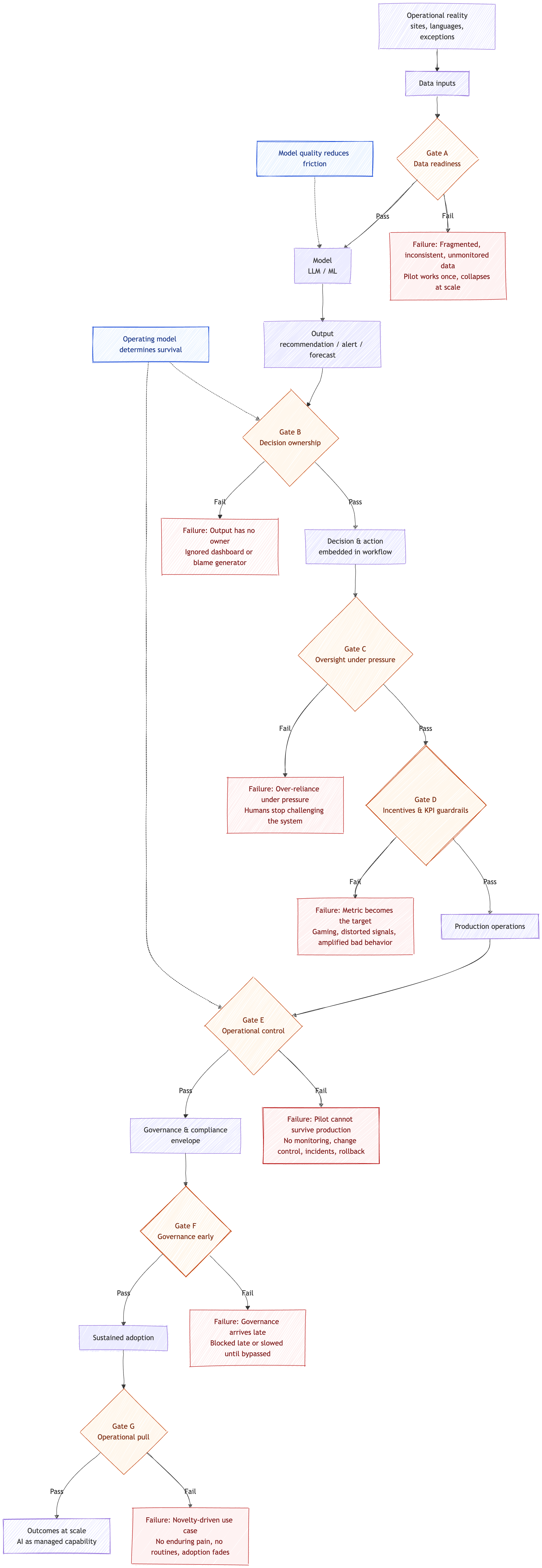

You will often hear that the next generation of models will fix accuracy, reliability, and adoption all at once. In practice, that promise is usually a sales pitch and a form of magical thinking. Many of the problems that kill operational AI use cases are not technical problems to begin with. They are problems of decision ownership, process design, incentives, data readiness, and operational control. Better models can reduce friction, but they do not repair a broken system that surrounds the model.

This matters because operations are where AI meets reality. Multiple sites, different languages, varying data maturity, strong safety and compliance expectations, and a long tail of exceptions. When AI fails here, it rarely fails because the model is slightly worse than the vendor claimed. It fails because the organization cannot reliably turn outputs into decisions and actions, cannot feed the system with trustworthy inputs over time, and cannot govern how humans use the system under pressure.

Below are the most common operational failure patterns that no model upgrade will solve. Each one is a management and operating model issue first.

The output has no owner

The AI produces recommendations, alerts, or forecasts, but nobody clearly owns the decision, the exceptions, or the outcome. The output becomes another dashboard that people glance at and ignore, or worse, it becomes a source of arguments when something goes wrong.

Accountability does not emerge from accuracy. If the organization has not defined decision rights and responsibility, the best model in the world will still produce orphaned outputs. Human roles and responsibilities are a core risk factor in real deployments.

Treat every operational AI use case as a decision system. Assign a single accountable operational owner, define who can override, who reviews exceptions, and what is logged for learning and audit.

Data exists, but it is unusable

You have data, but it is fragmented across sites, suppliers, ERPs, and local workarounds. Labels are inconsistent, lineage is unclear, and data quality is not monitored. The pilot works in one plant or one country, then collapses when scaled.

Models do not invent missing operational reality. If the data does not represent real conditions, including errors, outliers, and local variation, improvements in modeling will hit a ceiling quickly. Building AI-ready data is a continuous practice, not a one-time extraction.

Invest in data readiness as an operating capability, build metadata discipline, data observability, governance, and ongoing improvement tied to the highest-value workflows. If data issues exist, treat them as blockers, not as noise the model will learn.

The pilot cannot survive production conditions

A prototype demonstrates promise, then fails once deployed into real operational cadence. Shift changes, seasonality, language variation, supplier disruptions, and the daily chaos of exceptions. Performance drifts, confidence drops, and the use case quietly dies.

Production failures are usually lifecycle failures like missing monitoring, weak change control, no incident response, and no rollback plan. Model quality is rarely the limiting factor once the system meets the messiness of operations.

Run the use case like a managed service. Require logging, monitoring, alerting, incident handling, and rollback as part of the definition of done. If it is operationally critical, it must be controlled.

The metric becomes the target

The AI optimizes a KPI such as utilization, throughput, on-time delivery, or average handling time. People respond by gaming the metric, shifting work, redefining categories, or pushing risk elsewhere. The AI then amplifies that behavior at scale because it rely on the very signals being distorted.

If incentives and metrics are misaligned, accuracy improvements only help the organization optimize the wrong thing faster. This is a management system problem, not a modeling problem.

Use counter-balancing measures and explicit constraints, including what must not worsen like quality escapes, safety incidents, rework, customer harm, employee fatigue. Review incentives as part of AI governance, not as an afterthought.

Over-reliance under pressure

In the control room, the contact center, the dispatch function, or the planning team, people start treating the AI as an authority. Under time pressure, they stop challenging it. Errors become systemic because humans stop being a real check.

This is a human-system interaction risk. Even if the model improves, organizations still face the tendency toward automatic reliance and over-reliance, especially when output looks confident. In EU, expectations for human oversight increasingly assume the ability to monitor, interpret, override, and stop for higher-risk systems.

Design for intervention. Make uncertainty visible, define thresholds that trigger mandatory review, and ensure clear override and stop procedures for high-impact actions. Oversight must be practical in the workflow, not theoretical in policy.

Governance arrives late and kills the use case

A use case looks compelling, then gets blocked late by risk, privacy, compliance, or internal audit. Or it survives only after so many controls are bolted on that it becomes slow and unusable, so the business bypasses it.

Legal and liability constraints define feasibility for many operational decisions. You cannot solve governance obligations with accuracy. In EU, for higher-risk contexts, oversight, traceability, and control expectations shape what is viable.

Match use cases to governance maturity. Start with workflows where controls are realistic, and design oversight and documentation in from the start for higher-risk areas.

The use case is driven by novelty, not operational pull

The initiative is framed as we must do something with AI, rather than as a commitment to solve a persistent operational pain point. People cooperate during the pilot, then move on because it never becomes essential to daily work.

If the problem is not enduring and the users do not feel the pain, model improvements do not create adoption. Focusing on technology rather than the operational problem is a repeatable failure pattern.

Fund enduring operational problems and commit long enough to embed new routines. Treat adoption as process change, not as model rollout.

Operational AI Survival Pipeline (Decision System Gates)

A pre-mortem you can require before funding

Before approving an operational AI use case, require answers:

Decision and action: What decision changes, who owns it, and what action follows the output?

Value and tolerance: What is the cost of being wrong, and what error is acceptable?

Data readiness: Is the data representative, governed, and monitored across sites and time?

Oversight: How do you prevent over-reliance, and how can humans override or stop when needed?

Incentives: Will the KPI be gamed once it becomes a target, and what guardrails prevent distortion?

Operational control: How do you monitor performance, handle incidents, roll back changes, and manage vendor change?

If these cannot be answered crisply, the organization is not arguing about AI. It is arguing about hope.

Operational AI is not a model race. It is an operating model decision. The enterprises that win will treat AI as a managed capability. Clear decision ownership, data readiness as a practice, incentives that do not invite gaming, and oversight that enables humans to intervene. Until those foundations exist, better models will fix it is not a strategy. It is a way to avoid the hard work.